Trends in Linked Open Data

Two days in Venice offered a wealth of insights into Linked Open Data practice

The Georgian Papers Programme (GPP) is an ambitious transatlantic project contributed to by King's College London, Royal Collections Trust, College of William and Mary, Omohundro Institute, the Sons of the American Revolution, the Mount Vernon Ladies' Association. King's Digital Lab, where I am hosted as GPP Metadata Analyst, is providing technical strategy and developing a proof of concept digital humanities tool. The rich content derives from the Royal Archives’ holdings of the papers of the Hanoverian kings and their courts.

As the Metadata Analyst for GPP, my role is to discover how best to describe this material to ensure that it is fully searchable (using either UK or US, modern or historical spelling;)and that the methods of description conform to best practice. I was pleased to be offered the chance by Geoff Browell of KCL Archives to attend the LODLAM Summit 2017 which was held in Venice, 28-29 June. The summit offers opportunities for discussion and networking for those within the Linked Open Data (LOD) community working with and within Libraries, Archives and Museums. But the format for the summit was something new to me - an unconference?

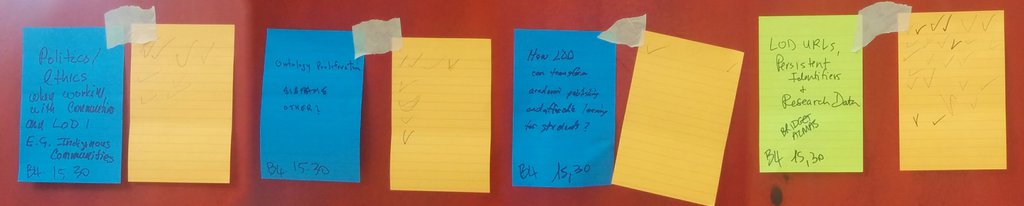

Voting for "unconference" sessions

An unconference has no set topics for sessions; instead topics are offered in short pitches by potential session leaders to the attendees who then vote on which sessions they would like to see happen. This flexibility allows for those discussions which are of most interest to go ahead, but did sometimes lead to missed opportunities to attend sessions of interest because as many as seven sessions could be run concurrently! Regardless, the summit offered me the chance to engage with LOD practitioners in meaningful discussions and many of the strands have shaped my thinking around the GPP.

In that spirit, what follows is part reportage to direct the reader to some of exciting initiatives in LOD, and part reflection on state of LOD today.

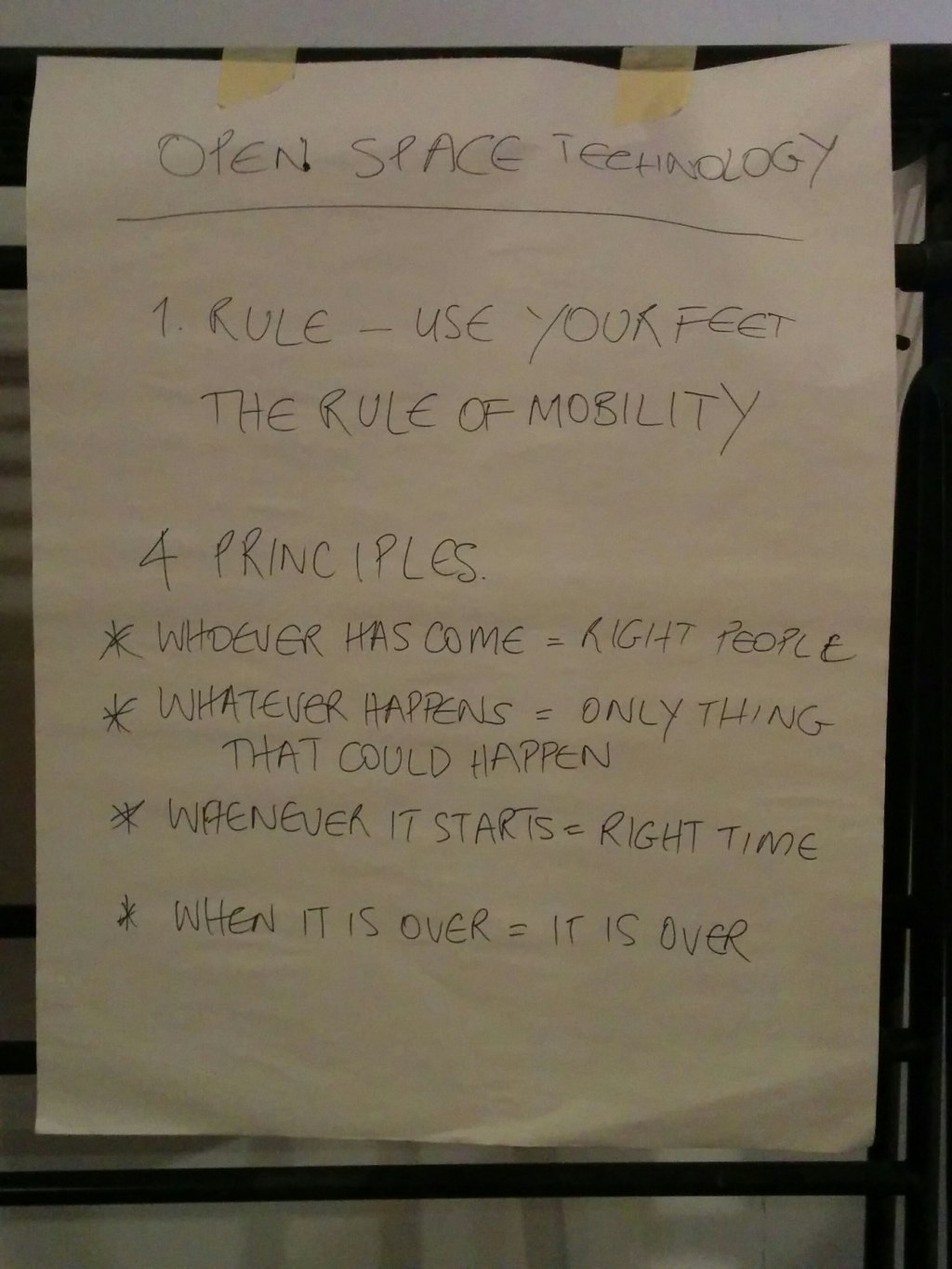

We opened with Pasquale Gagliardi, the Secretary General of the Cini Foundation (Fondazione Giorgio Cini), who warmly welcomed delegates to the foundation and discussed the work they are doing around opening up access to their own archives and graphic collections. Ingrid Mason, Deployment Strategist at AARNet, set the foundation for the conference specifically around the concept of OpenSpace technology.

Open Space Technology - a pragmatic philosophy!

The first of the sessions I opted to attend concerned Schema.org (where did it come from?), and the proposal for an archives extension. Led by Richard Wallis, founder of Data Liberate, these sessions were very informative and I look forward to evaluating Schema.org as a possible metadata standard to use within the GPP. A particular point of interest for me from these two sessions was the huge uptake by website creators (12 million domains as of 2015) despite an uncertainty that it impacts on website usage due to the opacity of search engine algorithms. Schema.org is available in a number of metadata flavours too (microdata, RDFa, JSON-LD) to better fit into whatever your current LOD practice might be.

Lunch held in the Cypress Cloister (Buora)

Following a very sunny lunch the five finalists for the LODLAM challenge presented their projects (winners for the two prizes were voted on and awarded on Thursday 29th).

All 5 were excellent projects, but whilst DIVE+ was a deserved ultimate winner of the grand prize, I particularly liked WarSampo for it’s straightforward approach of using existing datasets to provide a combined resource of great interest to the public. War history, especially around WWI and WWII, remains a topic that still impacts widely on the wider Western communities that were involved and excites the imagination. The attraction of LOD to academic communities seems clear but exposing what it can do to a wider community is to be applauded and encouraged.

(Also checkout Fishing in the data ocean, Linked Data Service Platform for Chinese Genealogy (Shanghai Library) Oslo Public Library if you get a chance!)

The two sessions I opted for in the afternoon were around ULAN and the possibility of reconfiguring how it is modeled, led by Joan Cobb from the Getty, and then a compelling session around politics and ethics in LOD lead by Stacy Allison-Cassin from York University in Canada. This session resonated with me in terms of previous work I have undertaken relating to digitisation of indigenous knowledge and the sensitivities involved, so I was not surprised to find two other kiwis (Adam Moriarty from Auckland War Memorial Museum and Fenella France from the Library of Congress) in this discussion as these issues come up time and time again within the Aotearoa/New Zealand context.

Questions around ethics in LOD practice were discussed, specifically around data about or produced by indigenous peoples but also more generally too which was surprising but very welcome. Indigenous approaches to knowledge (and I must be clear here that there is no one unifying approach even within one collective group of people, for example, Māori) are often more protective of access and how knowledge is encoded in comparison to a more widely accepted Western model of “open knowledge for all.”

In many places, indigenous peoples hold sacred knowledge sacrosanct and discourage sharing among the wider group, let alone beyond it. In terms of data that may suit the creation of a LOD dataset, names are often a common access point. Within an indigenous context this is often fraught: personal names and who defines their spelling, format and use; geographic names when many groups might hold conflicting names for the same geographic place and these conflicting names might be tied to land claims; or, tribal names when the name by which a group may know another is considered derogatory by that group. The appearance of a personal name, when it belongs to someone who has died, can also be inappropriate amongst some groups. Ownership issues around data are not uncommon and due to experiences with exploitative practices of outsider groups such as government, academics (especially within an anthropological setting as well as relating to medical research), and businesses, many indigenous groups are suspicious of the activities of those from these groups.

Against a backdrop of Venetian thunderstorms, the first afternoon culminated in short format presentations, quirkily billed as “Dork Shorts”, of various projects; discussions around what participants are working on in the LOD world. One covered W3C publishing best practice for publishing data on the web, a timely reminder to consider not just the shape of the data you’re exposing but the process by which you are making it available. Another attendee highlighted the importance of applying for UI/UX in grant applications for LOD work which should be an important part of any digital humanities project.

The following day I attended sessions on Wikidata (lead by Simon Cobb, Leeds University Library), LOD and research (lead by Francesca Tomasi, University of Bologna), and LOD and affordable learning (lead by Mark Bilby, California State University Fullerton).

Part of the discussion around Wikidata was how editors could work collaboratively to improve the resource. Mechanisms to facilitate communication were debated but no firm agreement for a preferred method was reached. Campaigns to improve Wikidata around particular themes or people were mooted and Katherine Thornton from Yale University Library talked about a “one edit a day for 100 days” challenge. Despite the challenges presented amongst the LOD community, Wikidata is an attractive dataset due to its openness and frequent linking to other LOD giants such as VIAF and Geonames. The opportunity to harness the processing power of family historians and other highly motivated interest groups on a platform that doesn’t require maintaining in-house is a very appealing feature.

The space for crowdsourcing is now well-established, notably with wikidata as a LOD platform to which many of the sessions on the second day of conference were devoted. The size of datasets being produced indicates that, for accuracy, a much larger pool than the producing research group and associated students is required to process the data when machine processing is not up to scratch.

In the final two sessions, there were discussions around:

- Modelling data - specifically that a complex model does not necessarily mean a complicated one, and the ability to capture and expose data provenance was highlighted to assist users in evaluating the reliability and authority of data and data providers

- LOD and affordable learning; specifically how LOD could be used to create cheaper or free open learning resources for tertiary students. The sometimes exorbitant costs of textbooks, even in electronic form, continues to be a barrier for some students to fully engage with the classwork assigned within their course

From my perspective it seems clear that LOD is now a well-entrenched practice, and researchers are exposing their data as a matter of course. Yet the challenges around which metadata format standard, LOD standard, model, and other datasets to use, to ensure that the datasets produced continue to be fit for purpose persist. Also, there is the question of "what happens next?" For instance, during a session which was focussed on discussing what DH tools exist to make use of the data that LOD communities produce, much of the session was devoted to the possible tools that people wish they had rather than the tools that currently exist. So it’s timely that as a LOD practitioner, I’m now embedded in KDL: a team with aspirations to develop those nascent tools. I’m looking forward to contributing to to their evolution.

Sam Callaghan, July 2017